Each year we have the opportunity to change the photograph that appears on the cover of Computer Graphics Forum, the journal of Eurographics, the European Association for Computer Graphics. We therefore organize a competition for the Eurographics community to find next year's cover image. This is your opportunity to show the world what you can do! Upload your images before December 31st of the current year. Each picture must have been produced with the aid of computer graphics, and you must hold its copyright. See the upload page for further details.

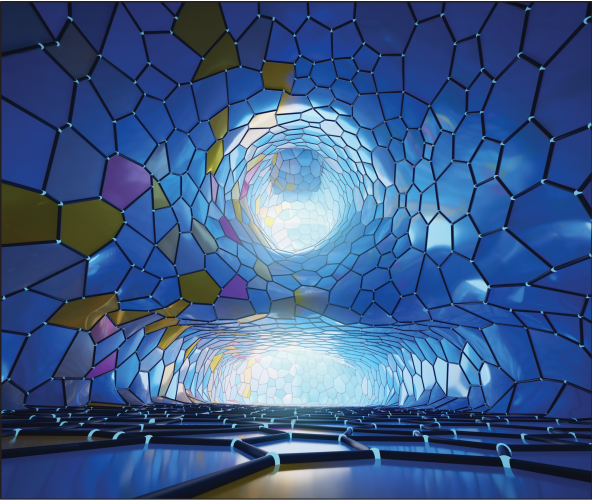

Winner 2025

Dennis Bukenberger

TU Munich

Lightweight design structures reduce costs and the environmental footprint of resource-intensive industries by minimizing material use, thus lowering energy consumption during operation. In our paper, we present a 3D material deposition strategy based on a stress-adaptive Voronoi diagram. Site density is increased in regions of higher stress and reduced in less critical areas. The result is an efficient distribution of the constrained material budget (in this example 50% of the solid structure), ensuring optimal mechanical performance. For this illustration, we modified the Sponza scene, replacing all load-bearing structures on the ground floor with our custom stress-optimized 3D Voronoi design. The image was rendered using Blender.
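The idea of placing more Voronoi sites where stress is higher can be illustrated with a minimal sketch. It is not the method from the paper: it simply draws site positions from grid cells with probability proportional to a per-cell stress value. The function name `sample_sites` and the dictionary-based stress field are assumptions made for this illustration.

```python
import random


def sample_sites(stress, n_sites, seed=0):
    """Draw n_sites points, picking grid cells with probability
    proportional to their stress value.

    stress: dict mapping an integer cell index (i, j, k) to a
    non-negative stress value. This input format is hypothetical;
    a real pipeline would obtain stresses from a simulation.
    """
    rng = random.Random(seed)
    cells = list(stress.keys())
    weights = [stress[c] for c in cells]
    sites = []
    for i, j, k in rng.choices(cells, weights=weights, k=n_sites):
        # Jitter inside the unit cell so sites do not coincide.
        sites.append((i + rng.random(), j + rng.random(), k + rng.random()))
    return sites


# Two cells: one under high stress, one under low stress.
demo_stress = {(0, 0, 0): 9.0, (1, 0, 0): 1.0}
demo_sites = sample_sites(demo_stress, 1000)
high = sum(1 for x, _, _ in demo_sites if x < 1.0)
print(high, len(demo_sites) - high)
```

The resulting points could then be passed to any 3D Voronoi routine as sites; the highly stressed cell receives most of them, so its Voronoi cells come out smaller and the structure denser there.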

Winner 2024

Polygon Laplacian Made Robust by Dennis R. Bukenberger

This image shows the inside of the teaser figure ‘fertility’ from Polygon Laplacian Made Robust. It visualizes the condition numbers of individual polygon stiffness matrices. The mesh transitions from the input mesh to our result from left to right. The original mesh features disfigured polygons with alarming (yellow) or terrible (magenta) condition numbers. With our tailored smoothing algorithm, polygons become very regular and, combined with our improved polygon Laplacian, result in better condition numbers (blue) and improved robustness in computational simulation. Blender was used to stylize the geometry and render the image.

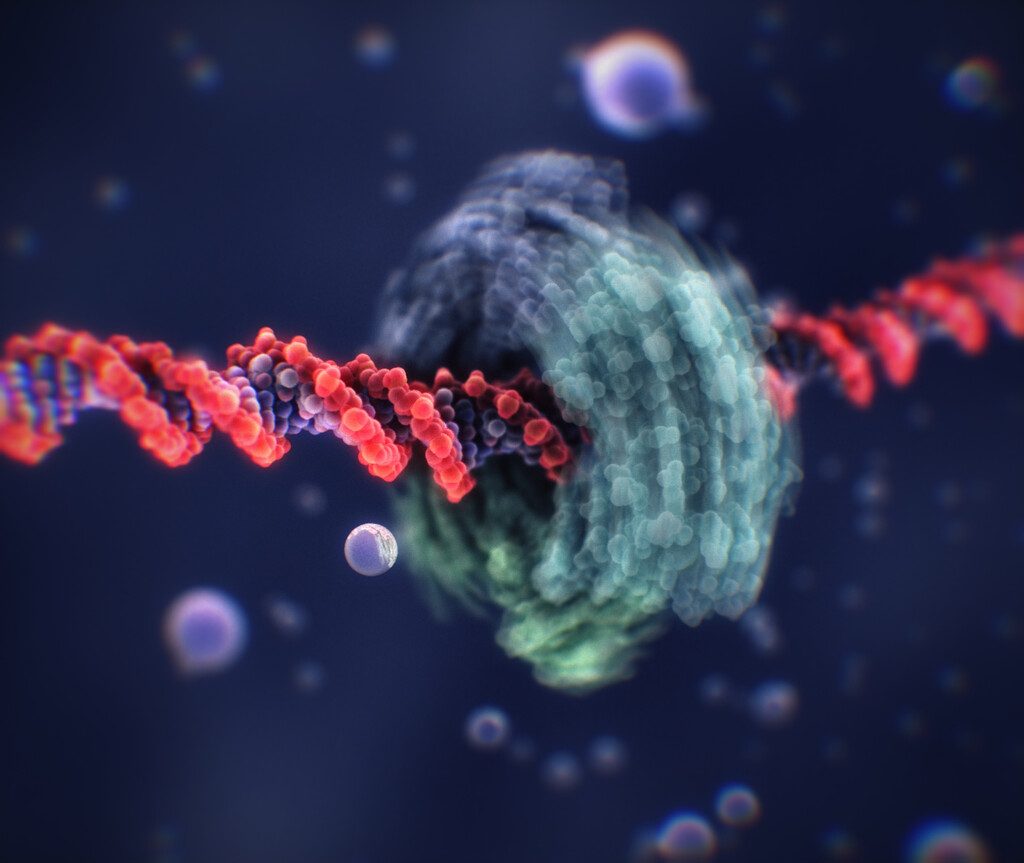

Winner 2023

Peter Mindek

Nanographics

This image shows a proliferating cell nuclear antigen (PCNA) molecule sliding along the DNA in a rotation-coupled translation manner. It is part of an animation explaining the process of DNA replication. It was rendered by the Marion/Vj real-time molecular animation framework. While the PCNA molecule is loaded from a PDB file (6TNY), the DNA is generated procedurally. The bubbles are generated in real time as a post-processing effect, using a previously rendered frame to create the illusion of refraction. While light-interacting bubbles are unrealistic at the atomic scale, they serve as anchors that help with spatial orientation while the camera moves around in the 3D scene. The motion blur was created by averaging 30 frames of the real-time rendered animation.
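Motion blur by frame averaging, as mentioned for this image, amounts to taking the per-pixel mean over consecutive frames. The following is a minimal sketch of that step only, assuming frames are stored as nested lists of RGB float tuples; the function name `average_frames` and the data layout are chosen for illustration and are not taken from the framework.

```python
def average_frames(frames):
    """Average equally sized frames pixel by pixel.

    frames: list of frames, each a list of rows, each row a list of
    (r, g, b) float tuples. Returns one frame in the same layout.
    """
    n = len(frames)
    if n == 0:
        raise ValueError("need at least one frame")
    height = len(frames[0])
    width = len(frames[0][0])
    blurred = []
    for y in range(height):
        row = []
        for x in range(width):
            # Mean of each color channel over all frames.
            r = sum(f[y][x][0] for f in frames) / n
            g = sum(f[y][x][1] for f in frames) / n
            b = sum(f[y][x][2] for f in frames) / n
            row.append((r, g, b))
        blurred.append(row)
    return blurred
```

A bright dot that moves one pixel per frame across 30 frames is smeared into a streak in which each pixel carries one thirtieth of the dot's intensity.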

Winner 2022

Yoshiki Kaminaka, Yuki Mikamoto, and Kazufumi Kaneda

Hiroshima University

The bubble flower found on the campus of Hiroshima University is a new kind of fantasy plant that releases soap bubbles to drift in the wind, just as grasses spread their pollen on the wind. Those who see this flower remember their childhood and are freed from the tiredness of hard work.

The flower with thin-film petals was modeled using Blender. The film thickness of each soap bubble is varied to express different appearances of the interference color. To realistically render the interference color of petals and soap bubbles, we have extended the PBRT renderer to allow full spectral rendering based on wave optics. This extension also allows image-based lighting with high dynamic range spectral images. The high dynamic range spectral image was converted from an RGB image of the campus landscape using our newly developed spectral super-resolution method consisting of a deep neural network with ensemble learning.
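Why film thickness changes the interference color can be seen from the textbook Airy formula for a thin, non-absorbing film surrounded by air. The sketch below is not the authors' PBRT extension. It evaluates reflectance at normal incidence only, and the refractive index 1.33 (roughly that of water) and the function names are assumptions made for this illustration.

```python
import math


def film_reflectance(wavelength_nm, thickness_nm, n_film=1.33):
    """Reflectance of a free-standing thin film in air at normal
    incidence, using the Airy formula for a non-absorbing film."""
    # Reflectance of a single air/film interface (Fresnel, normal incidence).
    r0 = ((n_film - 1.0) / (n_film + 1.0)) ** 2
    # Round-trip phase accumulated inside the film.
    delta = 4.0 * math.pi * n_film * thickness_nm / wavelength_nm
    finesse = 4.0 * r0 / (1.0 - r0) ** 2
    s = math.sin(delta / 2.0) ** 2
    return finesse * s / (1.0 + finesse * s)


def film_spectrum(thickness_nm, wavelengths_nm=range(400, 701, 10)):
    """Reflectance sampled over the visible range for one thickness."""
    return [(w, film_reflectance(w, thickness_nm)) for w in wavelengths_nm]


for thickness in (200, 400):
    peak = max(film_spectrum(thickness), key=lambda wr: wr[1])
    print(thickness, "nm film reflects most strongly near", peak[0], "nm")
```

Different thicknesses shift the reflectance peaks to different wavelengths, which is the reason a spectral renderer is needed: the color cannot be derived from three RGB samples alone.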

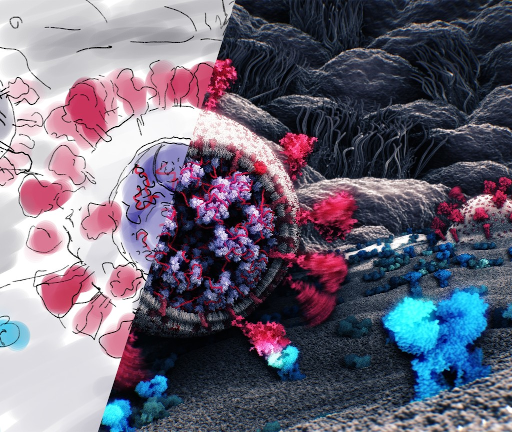

Winner 2021

Tatjana Hirschmugl (1), Tobias Klein (1), Ondřej Strnad (2), Deng Luo (2), Ivan Viola (2), and Peter Mindek (3)

(1) Nanographics GmbH

(2) KAUST

(3) Nanographics GmbH, TU Wien

The image shows the process of converting a scientific sketch into a 3D molecular visualization. We see a virion of SARS-CoV-2 whose spike protein has just attached to an ACE-2 receptor of an epithelial cell. To illustrate this process, the scene was roughly sketched by an illustrator. The sketch was then converted into a 3D visualization by using an atomistic model of the virion created by statistical modeling. The scene was then rendered in real time by an impostor-based molecular visualization algorithm. The virion model is accompanied by the procedurally generated cell membrane populated by lipids and protein molecules. The background, which shows epithelial cells and their cilia, was modeled and rendered in Blender and composited into the visualization to provide viewers with context.

Winner 2020

Károly Zsolnai-Fehér (1), Peter Wonka (2) and Michael Wimmer (1)

(1) TU Wien

(2) KAUST

This image shows the “Paradigm” scene from our paper “Photorealistic Material Editing Through Direct Image Manipulation”. In this work, we presented a technique that aims to empower novice and intermediate-level users to synthesize high-quality photorealistic materials while requiring only basic image processing knowledge. Our method takes a marked-up, non-physical image as an input and finds the closest matching photorealistic material that mimics this input. The entire process takes less than 30 seconds per material, and to demonstrate its usefulness, we have used it to populate this beautiful scene with materials.

Below, we explain three examples shown in this image (noting that the scene contains more synthesized materials). First, the gold material was “transmuted” from silver by editing the color balance of the image. Second, the dirt material below was made by using image inpainting. Third, the vividness of the grass material was increased through a simple contrast enhancement operation.

The geometry of the scene was created by Reynante Martinez.

For more information, the entirety of the paper and the described technique are available online.

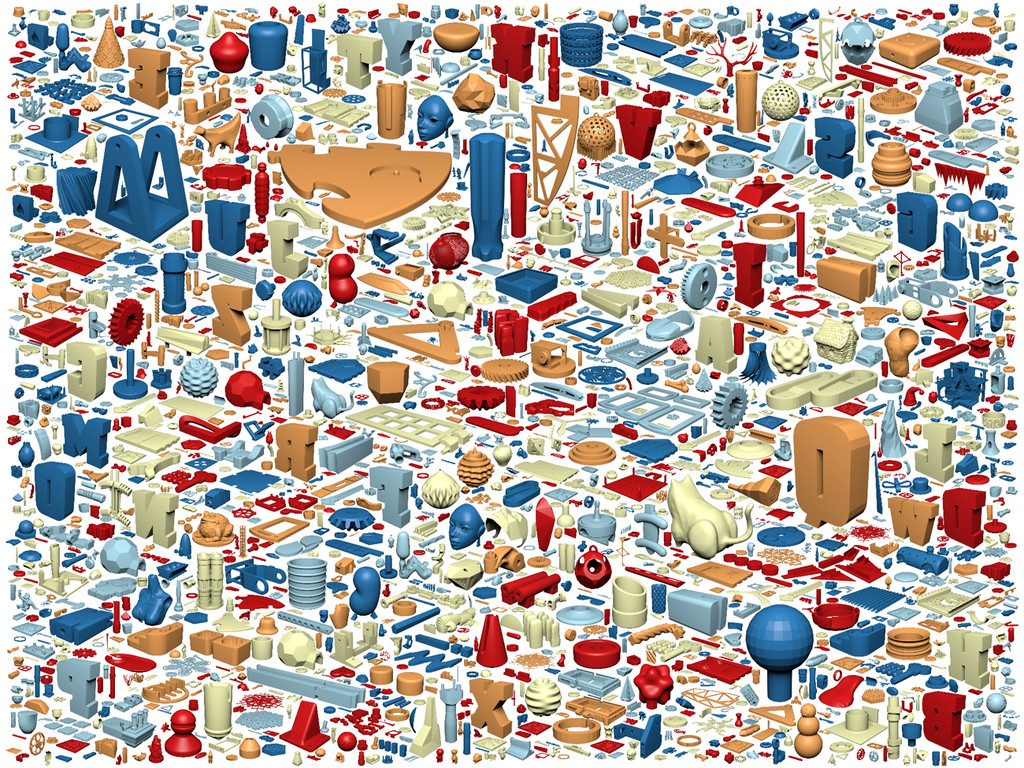

Winner 2019

Kai Lawonn (1) and Tobias Günther (2)

(1) University of Koblenz-Landau

(2) ETH Zürich

This image shows a triangulation of three ballet dancers at sunset. This stylization was obtained with our interactive and user-centered image triangulation algorithm, which is based on an interactive optimization that places triangles with constant color or linear color gradients to fit a target image. The efficient GPU implementation is described in the paper ‘Stylized Image Triangulation’ by Lawonn and Günther, DOI: 10.1111/cgf.13526.
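For a triangle with constant color, the color that minimizes the squared error against the target image is the mean of the pixels it covers. The sketch below shows only this color-fitting step for a fixed triangle, on the CPU. It is not the GPU implementation from the paper and does not optimize vertex positions. The function names and the nested-list image layout are assumptions made for this illustration.

```python
def edge(ax, ay, bx, by, px, py):
    """Signed area test: which side of the line a->b the point p lies on."""
    return (bx - ax) * (py - ay) - (by - ay) * (px - ax)


def triangle_mean_color(image, tri):
    """Mean color of all pixels whose centers lie inside the triangle.

    image: list of rows of (r, g, b) float tuples.
    tri: three (x, y) vertices in pixel coordinates.
    Returns None if no pixel center is covered.
    """
    (x0, y0), (x1, y1), (x2, y2) = tri
    total = [0.0, 0.0, 0.0]
    count = 0
    for y, row in enumerate(image):
        for x, pixel in enumerate(row):
            px, py = x + 0.5, y + 0.5  # pixel center
            w0 = edge(x1, y1, x2, y2, px, py)
            w1 = edge(x2, y2, x0, y0, px, py)
            w2 = edge(x0, y0, x1, y1, px, py)
            # Accept either vertex winding order.
            inside = (w0 >= 0 and w1 >= 0 and w2 >= 0) or (
                w0 <= 0 and w1 <= 0 and w2 <= 0
            )
            if inside:
                for c in range(3):
                    total[c] += pixel[c]
                count += 1
    if count == 0:
        return None
    return tuple(t / count for t in total)
```

An optimizer along the lines described above would alternate this kind of color fit with moving the triangle vertices to reduce the remaining error; a linear color gradient per triangle would replace the mean with a least-squares fit.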

Winner 2018

Qingnan Zhou (1) and Alec Jacobson (2)

(1) Adobe Systems Inc.

(2) University of Toronto

This image shows all 10,000 models that make up Thingi10K (“Thingi10K: A Dataset of 10,000 3D-Printing Models”), winner of the SGP 2017 Dataset Award. The dataset is publicly available and the provided web interface allows filtering on m